Sematic is an open-source ML development platform. It lets ML Engineers and Data Scientists write arbitrarily complex end-to-end pipelines with simple Python and execute them on their local machine, in a cloud VM, or on a Kubernetes cluster to leverage cloud resources.

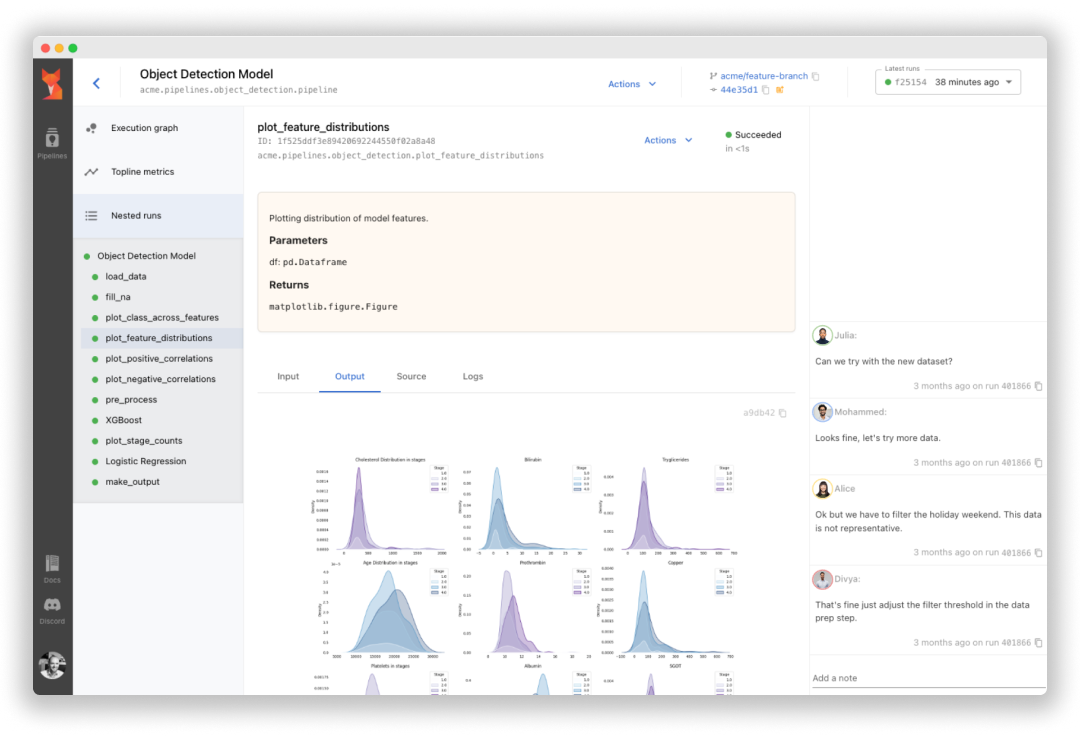

Sematic is based on learnings gathered at top self-driving car companies. It enables chaining data processing jobs (e.g. Apache Spark) with model training (e.g. PyTorch, TensorFlow), or any other arbitrary Python business logic, into type-safe, traceable, reproducible end-to-end pipelines that can be monitored and visualized in a modern web dashboard.
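To make "type-safe" and "traceable" concrete, here is a minimal sketch of the idea using only the Python standard library. It does not use Sematic's API: the `step` decorator, the `TRACE` list, and the three example functions are hypothetical names chosen for illustration, and the type check only handles plain classes such as `int` or `list`.

```python
import functools
import inspect
from typing import Any, Callable, Dict, List, get_type_hints

# Hypothetical in-memory trace; a real platform would persist this somewhere.
TRACE: List[Dict[str, Any]] = []


def step(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Check inputs and output against fn's annotations and record each call."""
    hints = get_type_hints(fn)
    signature = inspect.signature(fn)

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        bound = signature.bind(*args, **kwargs)
        # Reject arguments whose runtime type does not match the annotation.
        for name, value in bound.arguments.items():
            expected = hints.get(name)
            if expected is not None and not isinstance(value, expected):
                raise TypeError(f"{fn.__name__}: {name} must be {expected.__name__}")
        result = fn(*args, **kwargs)
        # Apply the same check to the return value.
        expected_out = hints.get("return")
        if expected_out is not None and not isinstance(result, expected_out):
            raise TypeError(f"{fn.__name__}: output must be {expected_out.__name__}")
        # Record the call once it has completed successfully.
        TRACE.append(
            {"step": fn.__name__, "inputs": dict(bound.arguments), "output": result}
        )
        return result

    return wrapper


@step
def load_data(n: int) -> list:
    # Stand-in for a data processing job.
    return [float(i) for i in range(n)]


@step
def train(data: list) -> float:
    # Stand-in for model training: returns the mean of the data.
    return sum(data) / len(data)


@step
def pipeline(n: int) -> float:
    # Steps are chained with ordinary Python function calls.
    return train(load_data(n))


result = pipeline(4)
print(result)  # prints 1.5
```

After the run, `TRACE` holds one entry per step in completion order (`load_data`, `train`, `pipeline`), which is the kind of record a dashboard could display. Calling `train("not a list")` raises `TypeError` before the step body runs.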

Read our documentation and join our Discord channel.

- Easy onboarding – no deployment or infrastructure is needed to get started; simply install Sematic locally and start exploring.

- Local-to-cloud parity – run the same code on your laptop and on your Kubernetes cluster.

- End-to-end traceability – all pipeline artifacts are persisted, tracked, and visualizable in a web dashboard.

- Access heterogeneous compute – customize required resources for each pipeline step to optimize your performance and cloud footprint (CPUs, memory, GPUs, Spark cluster, etc.).

- Reproducibility – rerun your pipelines from the UI with guaranteed reproducibility of results.

To get started locally, simply install Sematic in your Python environment:

$ pip install sematic

Start the local web dashboard:

$ sematic start

Run an example pipeline:

$ sematic run examples/mnist/pytorch

Create a new boilerplate project:

$ sematic new my_new_project

Or from an existing example: